School of Psychology - Georgia Institute of Technology

Overview

Most of what we hear is due to sound waves traveling through the air. Sound waves travel through the outer and middle ear before arriving at the cochlea in the inner ear. Sound waves can also get to the cochlea through direct vibration of the bones in the head. This pathway of sound is known as "bone conduction". The vibrations carry the sound waves to the inner ear, and set up waves in the cochlea just like the waves that are set up by air-conducted sounds.


"Bonephones" are headsets that create vibrations against the head, in order to transmit sound to a listener via bone conduction. The term "bonephones" is generally used to describe modern lightweight bone-conduction headsets that are designed for applied use with auditory displays, rather than the traditional bone-conduction vibrators used in clinical audiology settings. Bonephones are distinguished from clinical devices by their potential for stereo presentation of sounds, their small size, comfort, and standardized input jack.

Utility of Bonephones

In comparison to headphones, using bonephones avoids covering the ears of the listener. This is important when the listener needs either (a) to keep the ears unobstructed, so that other sounds in the environment remain audible, or (b) to plug the ears, to prevent hearing damage from loud sounds in the environment. We have also demonstrated the potential utility of bonephones for the SWAN audio navigation system.

That study was reported at ICAD 2005. <ICAD Paper in PDF>

Here are some other advantages of bonephones:

Research Program

Since bonephones are relatively new, much research is still needed to understand how well we hear with them, how effectively they present different sounds, and how well they can present stereo and spatialized ("3D") audio signals. Research in the GT Sonification Lab, led by Ray Stanley, is addressing all of these issues.

Audibility Thresholds

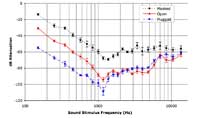

We examined the hearing thresholds for pure tones at a wide range of frequencies, with (1) the ears open, (2) the ears plugged with foam earplugs, and (3) the ears open in the presence of background noise. The initial findings were presented at ICAD 2005. <ICAD Paper in PDF> The key findings are summarized in the accompanying graph.

Spatial Audio

Slight timing and intensity differences are crucial in detecting the location of a sound source. Spatialized audio presentation relies on the ability to present different signals to the two ears, in order to mimic these interaural time and intensity/level differences (ITDs and ILDs). Many have suggested that stereo separation is not possible using bone conduction. However, we have shown that it is possible.
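As a rough illustration of how ITDs and ILDs lateralize a signal, the Python sketch below delays and attenuates one channel of a mono signal. The function name `lateralize`, its parameters, and the example values (0.5 ms and 6 dB) are assumptions made for illustration; they are not taken from the lab's software or studies, and a full spatialization system would normally do more than apply a single delay and gain.

```python
import math

def lateralize(mono, sample_rate, itd_s=0.0, ild_db=0.0):
    """Return (left, right) channels from a mono signal.

    Positive itd_s / ild_db push the image toward the right ear:
    the left channel is delayed and/or attenuated. Negative values
    do the same to the right channel. (Hypothetical helper.)
    """
    # ITD: delay the lagging ear by a whole number of samples.
    delay = round(abs(itd_s) * sample_rate)
    pad = [0.0] * delay
    lead = list(mono) + pad   # ear that hears the sound first
    lag = pad + list(mono)    # ear that hears it later
    left, right = (lag, lead) if itd_s >= 0 else (lead, lag)

    # ILD: attenuate the far ear by the requested level difference.
    gain = 10 ** (-abs(ild_db) / 20)
    if ild_db >= 0:
        left = [s * gain for s in left]
    else:
        right = [s * gain for s in right]
    return left, right

# Example: 10 ms of a 440 Hz tone, shifted toward the right ear.
SAMPLE_RATE = 44100
tone = [math.sin(2 * math.pi * 440 * n / SAMPLE_RATE) for n in range(441)]
left, right = lateralize(tone, SAMPLE_RATE, itd_s=0.0005, ild_db=6.0)
```

In this example the left channel starts 22 samples (about 0.5 ms) after the right channel and is about half its amplitude, so both cues point toward the right.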

We used the Coordinate Response Measure (CRM) to show sensitivity to ILDs and ITDs. In this task, participants identified a target speech signal lateralized with ITDs or ILDs among masker speech. Performance on this task improved as a function of the ILDs and ITDs delivered through the bonephones.

The initial results were presented at Human Factors (HFES 2005). <HFES Paper in PDF>

Subjective Impression of Lateralization

The first step in spatialization (3D audio) is lateralization (stereo separation or panning). We wanted to show that this is possible, and to examine the amount of lateralization that can be achieved with bonephones. To test this, we had participants adjust an indicator on a model head to show the lateralization of a sound source traveling through headphones or bonephones. We found that participants achieved as much lateralization through bonephones as through headphones.

HRTFs for Bonephones

As we continue toward developing a true 3D audio impression with bonephones, we are working on a "Bone Related Transfer Function" or BRTF: a function that we can apply to a head-related transfer function (HRTF) in order to make sounds intended for headphones work with bonephones. To begin establishing BRTFs, we used a cancellation methodology to generate a set of bone-to-air shifts that can be used to form a BRTF. In this task, participants adjusted the phase and amplitude of tones at a given frequency until cancellation occurred. One tone was delivered through headphones and one through bonephones. This work is the main topic of Ray Stanley's master's thesis.
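The logic of a cancellation measurement can be sketched with an idealized model. The Python below assumes that, at a single frequency, the bone pathway differs from the air pathway only by a fixed gain and phase shift; the names `residual_rms`, `shift_from_null`, `PATH_GAIN`, and `PATH_PHASE`, and all numeric values, are hypothetical and are not taken from the thesis. Under that assumption the two tones cancel when their effective amplitudes match and their phases differ by half a cycle, so the settings at the null reveal the shift.

```python
import math

def residual_rms(bone_amp, bone_phase, path_gain, path_phase, n=360):
    """RMS of (air tone + bone tone) over one period.

    The air tone is the reference: amplitude 1, phase 0.
    The bone tone is scaled and shifted by the (unknown) pathway.
    """
    total = 0.0
    for k in range(n):
        wt = 2 * math.pi * k / n
        air = math.sin(wt)
        bone = path_gain * bone_amp * math.sin(wt + bone_phase + path_phase)
        total += (air + bone) ** 2
    return math.sqrt(total / n)

def shift_from_null(bone_amp, bone_phase):
    """Infer the bone pathway's level (dB) and phase (rad) relative to air
    from the amplitude and phase settings that produced cancellation."""
    level_db = 20 * math.log10(1.0 / bone_amp)
    phase = (math.pi - bone_phase) % (2 * math.pi)
    return level_db, phase

# Hypothetical pathway at one frequency (not measured data).
PATH_GAIN, PATH_PHASE = 0.5, 0.8

# Settings a listener would converge on in this idealized model.
null_amp, null_phase = 2.0, math.pi - 0.8

rms_at_null = residual_rms(null_amp, null_phase, PATH_GAIN, PATH_PHASE)
rms_off_null = residual_rms(null_amp, null_phase + 0.3, PATH_GAIN, PATH_PHASE)
level_db, phase_shift = shift_from_null(null_amp, null_phase)
```

Repeating such a measurement at each test frequency would give one level shift and one phase shift per frequency, which is the kind of data a frequency-dependent transfer function could be built from.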

Speech Intelligibility via Bone Conduction

In collaboration with Dr. Andrzej Przekwas and colleagues at CFD Research Corp. in Huntsville, Alabama, we are studying speech intelligibility for sounds presented via bone conduction and air conduction.

Publications Relating to the Research

(See the Publications page for all Sonification Lab publications.)

2009

Stanley, R. M., & Walker, B. N. (2009). Intelligibility of bone-conducted speech at different locations compared to air-conducted speech: Evaluation of bone-conduction transducers for use in radio communications. Proceedings of the Annual Meeting of the Human Factors and Ergonomics Society (HFES2009), San Antonio, TX (19-23 October). pp. TBD. <PDF>

2007

Walker, B. N., Stanley, R. M., Przekwas, A., Tan, X. G., Chen, Z. J., Yang, H. W., Wilkerson, P., Harrand, V., Chancey, C., & Houtsma, A. J. M. (2007). High Fidelity Modeling and Experimental Evaluation of Binaural Bone Conduction Communication Devices. Proceedings of the 19th International Congress on Acoustics (ICA 2007), Madrid, Spain (2-7 September). <PDF>

2006

Stanley, R. M., & Walker, B. N. (2006). Lateralization of sounds using bone-conduction headsets. Proceedings of the Annual Meeting of the Human Factors and Ergonomics Society (HFES2006), San Francisco, CA (16-20 October). pp. 1571-1575. <PDF>

2005

Walker, B. N., & Lindsay, J. (2005). Navigation performance in a virtual environment with bonephones. Proceedings of the International Conference on Auditory Display (ICAD2005), Limerick, Ireland (6-10 July). pp. 260-263. <PDF>

Walker, B. N., & Stanley, R. (2005). Thresholds of audibility for bone-conduction headsets. Proceedings of the International Conference on Auditory Display (ICAD2005), Limerick, Ireland (6-10 July). pp. 218-222. <PDF>

Walker, B. N., Stanley, R., Iyer, N., Simpson, B. D., & Brungart, D. S. (2005). Evaluation of bone-conduction headsets for use in multitalker communication environments. Proceedings of the Annual Meeting of the Human Factors and Ergonomics Society (HFES2005), Orlando, FL (26-30 September). pp. 1615-1619. <PDF>

Walker, B. N., Stanley, R. M., & Lindsay, J. (2005). Task, user characteristics, and environment interact to affect mobile audio design. Proceedings of the International Workshop on Auditory Displays for Mobile Context-Aware Systems at Pervasive2005, Munich, Germany (11 May). <PDF>

Products Using Bone Conduction

Acknowledgments

Many people have helped us and continue to help us conduct this research.

Dr. Doug Brungart and Dr. Brian Simpson - collaboration on CRM project, general methodology for adapting HRTFs, and notch filters.

Dr. Adrian Houtsma and Dr. Barbara Acker-Mills - equipment loan and expertise in measurement of acoustic stimuli.

Dr. Dennis Folds and Dr. Brad Fain - equipment loan for measurement of bone-conducted and air-conducted waves.

Dr. Andrzej Przekwas and colleagues at CFD Research Corp. in Huntsville, Alabama - for their amazing 3D models of the human head.

This research has been supported, in part, by grants from the US Army. Any opinions, findings, and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of the funding agencies or sponsors.

Bone conduction work at other institutions

Chalmers Room Acoustics Group (CRAG) - Professors Mendel Kleiner and Bo Håkansson, Aleksander Väljamäe

Contact Us:

Georgia Tech Sonification Lab

Bruce Walker

Ray Stanley